If you have used ChatGPT, Claude, or any modern AI tool, you have interacted with a Transformer. It is the specific architecture that turned Artificial Intelligence from a stumbling toddler into a fluent, reasoning assistant.

But what exactly is a Transformer? And what is this “Attention” mechanism everyone keeps talking about?

Today, we are going to strip away the complex math and look at the engine under the hood of the AI revolution.

The Problem: Why Old AI Was Like a Forgetful Translator

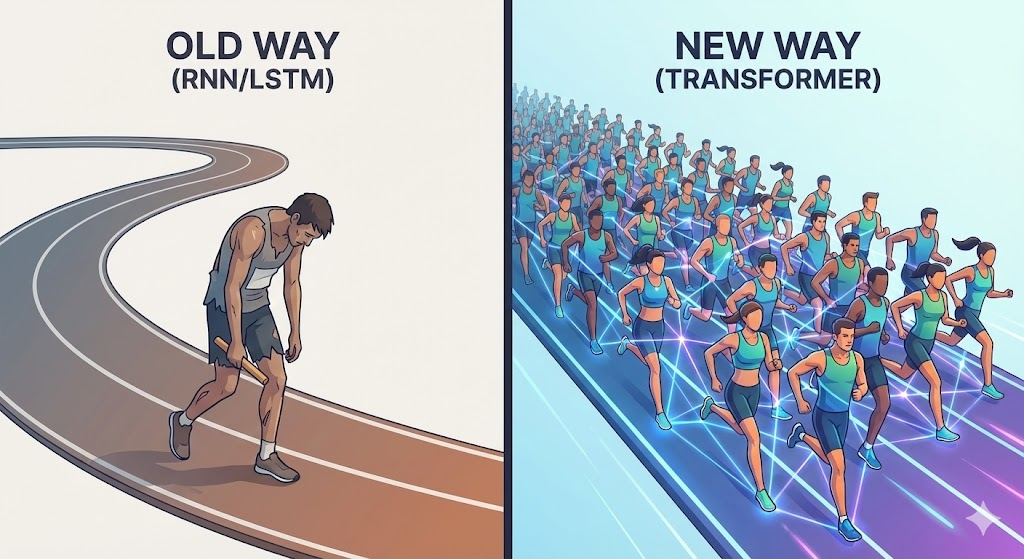

Before Transformers arrived on the scene (circa 2017), most AI language models processed text sequentially, one word at a time, from left to right. The dominant architecture for this was the Recurrent Neural Network (RNN).

Imagine reading a book through a tube where you can only see one word at a time. By the time you reach the end of a long sentence, you might have forgotten the beginning.

Real World Analogy: The Telephone Game

Think of old AI models like playing the game of “Telephone.” You whisper a message to the person next to you, and they pass it on. By the time the message reaches the 20th person, the original meaning (“The sky is blue”) might have morphed into something totally different (“The guy has the flu”). The context gets lost over distance.

This limitation made it nearly impossible for AI to write long essays or understand complex documents. It simply couldn’t “remember” enough context.
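To put a rough number on that forgetting, here is a toy sketch in Python. It is not a real RNN: the single running "memory" value and the `RETAIN` factor of 0.5 are assumptions made up for illustration, whereas real recurrent networks learn their update rules. It only shows how a signal shrinks when it has to survive one update per word.

```python
# Toy recurrent update: one running "memory" value, updated word by word.
# RETAIN = 0.5 is an illustrative assumption, not a trained weight.
RETAIN = 0.5


def first_word_influence(num_words, retain=RETAIN):
    """Share of the first word's signal left in memory after num_words words."""
    influence = 1.0  # the first word enters memory at full strength
    for _ in range(num_words - 1):
        influence *= retain  # each later word overwrites part of the memory
    return influence


for length in (2, 5, 20):
    print(length, first_word_influence(length))
```

With these made-up numbers, the first word's share of the memory halves with every new word, so by word 20 almost nothing of it is left. That is the "Telephone" effect in miniature.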

Enter The Transformer: Parallel Processing Powerhouse

The “Transformer” architecture changed everything by introducing Parallelism. Instead of reading one word at a time, a Transformer reads the entire sentence (or paragraph) at once.

It doesn’t wait for word #1 to finish before looking at word #2. It ingests the whole sequence simultaneously. This allows it to understand the relationship between words instantly, regardless of how far apart they are in the sentence.
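A small counting sketch can make the difference concrete. The two helper functions below, `sequential_hops` and `all_pairs`, are hypothetical names invented for this example, and nothing here is a real model. The sketch only counts how far apart two words are in each approach.

```python
words = ["the", "sky", "is", "blue"]


def sequential_hops(i, j):
    """Sequential view: information from word i reaches word j only
    after passing through every step in between."""
    return abs(j - i)


def all_pairs(n):
    """Transformer-style view: every position is compared directly with
    every other position, so any two words are a single step apart."""
    return [(i, j) for i in range(n) for j in range(n)]


pairs = all_pairs(len(words))
print(len(pairs))                          # 16 direct comparisons
print(sequential_hops(0, len(words) - 1))  # 3 hops from first word to last
```

Because no comparison in the grid depends on the result of another, all of them can be computed at the same time, which is where the speed-up comes from.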

Real World Analogy: The Team of Translators

Imagine you need to translate a massive document.

- The Old Way: You hire one translator who reads line by line. It takes forever, and they get tired.

- The Transformer Way: You hire 100 translators. You give the entire document to the group at once. Everyone looks at different parts simultaneously, but they all communicate with each other instantly to ensure consistency. The job is done in a fraction of the time with higher accuracy.

The Secret Sauce: The “Attention” Mechanism

Being able to read everything at once is great, but it creates a lot of noise. If you look at every word with equal importance, you get overwhelmed. This is where Attention (specifically “Self-Attention”) comes in.

Attention allows the model to decide which words are most important to understand the current context.

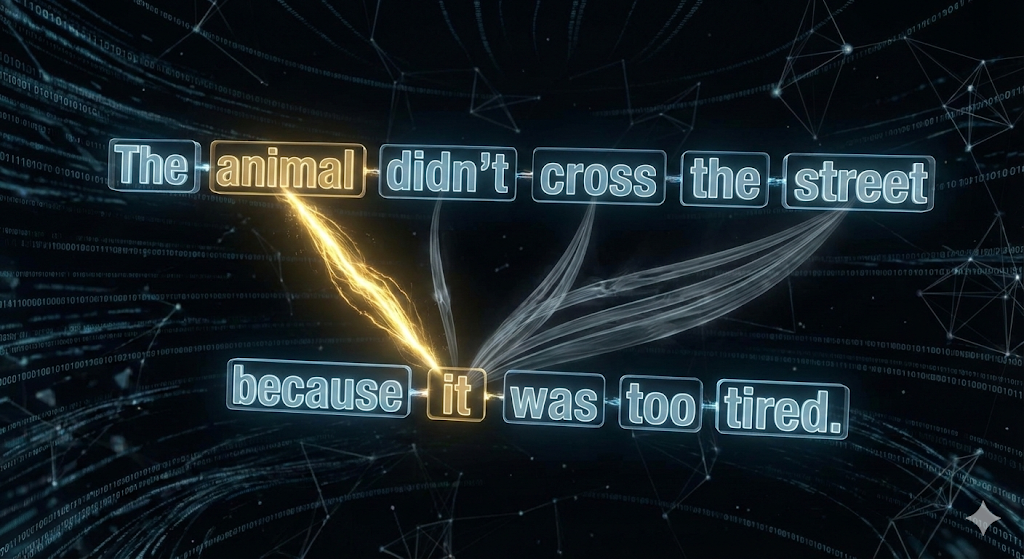

For example, take the sentence: “The animal didn’t cross the street because it was too tired.”

To a human, it’s obvious that “it” refers to the animal, not the street. To a machine, this is incredibly difficult. Does the street get tired? Does the animal?

The Attention Mechanism allows the model to assign a “weight” (or importance score) to the connection between “it” and “animal.”
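Here is a minimal sketch of how such weights could be computed for the word “it.” Everything numeric in it is an assumption: the two-dimensional vectors in `keys` and `query_it` are hand-made for illustration, while a real model learns vectors with hundreds or thousands of dimensions. The recipe itself (score each word with a dot product, scale, then apply softmax) follows the standard scaled dot-product form of attention.

```python
import math

# Hand-made 2-D vectors, assumed for illustration; real models learn these.
# Dimension 0 ~ "can get tired", dimension 1 ~ "is a place".
keys = {
    "animal": [3.0, 0.0],
    "street": [0.0, 3.0],
    "cross": [0.5, 0.5],
}
query_it = [1.0, 0.0]  # what "it ... was too tired" is looking for


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_weights(query, keys):
    """Score each word against the query, scale, and normalize."""
    scale = math.sqrt(len(query))
    scores = [
        sum(q * k for q, k in zip(query, vec)) / scale
        for vec in keys.values()
    ]
    return dict(zip(keys.keys(), softmax(scores)))


weights = attention_weights(query_it, keys)
for word, weight in weights.items():
    print(f"{word}: {weight:.2f}")
```

With these toy vectors, “animal” receives the largest weight and “street” the smallest, which mirrors the intuition that “it” should point at the animal.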

Real World Analogy: The Cocktail Party Effect

Imagine you are at a loud, crowded party. Hundreds of people are talking at once.

- Suddenly, someone across the room shouts your name.

- Your brain instantly tunes out the background noise and focuses (pays attention) entirely on that one voice.

You are still hearing the background noise, but your brain has assigned it a “low weight” while assigning the voice calling your name a “high weight.”

That is roughly what a Transformer does. When generating a response, it “shines a spotlight” on the specific words in your prompt that matter most and gives the fluff far less weight.
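The party analogy can be sketched as a weighted blend. The weights and the one-number "signals" below are invented for illustration (a real model works with learned vectors, not single numbers), and `blend` is a hypothetical helper name.

```python
# Assumed attention weights for four voices at the party (they sum to 1).
weights = {
    "your name": 0.94,
    "chatter A": 0.02,
    "chatter B": 0.02,
    "chatter C": 0.02,
}

# Each voice's "content" as a single number, purely for illustration.
signals = {
    "your name": 10.0,
    "chatter A": 1.0,
    "chatter B": -2.0,
    "chatter C": 4.0,
}


def blend(weights, signals):
    """Weighted sum: every voice contributes in proportion to its weight."""
    return sum(weights[name] * signals[name] for name in weights)


heard = blend(weights, signals)
print(round(heard, 2))
```

The result lands close to the high-weight voice but is not identical to it, because the background chatter is turned down rather than switched off.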

Why This Matters for Business

Understanding Transformers isn’t just academic; it explains why modern tools are so capable.

- Better Search (Semantic Search): Because of Attention, search engines can now interpret intent, not just match keywords. If you search “best dress for a summer wedding,” the engine understands that “summer” and “wedding” both describe the kind of “dress” you want, rather than just looking for pages where those words happen to appear.

- Personalization: In e-commerce, a Transformer model can look at a user’s entire click history at once (parallelism) and pay attention to specific high-intent items to recommend the perfect product.

- Contextual Chatbots: Customer support bots can now refer back to what you said five minutes ago because earlier messages stay inside the model’s context window (the span of text that Attention can look across).

Summary

The leap from “dumb” chatbots to “smart” AI Assistants happened because we stopped trying to process language one word at a time.

- Transformers allow computers to read an entire passage at once instead of word by word (Parallelism).

- Attention gives them the ability to ignore the noise and focus on what matters (Context).

Together, they form the foundation of the Generative AI era we are living in today.